Usage

Perceiving depth when using the module

Using the Stereo 3D module effectively starts with choosing the depth perception method that is most comfortable or convenient for you.

By default, the Side-by-side Right/Left (Cross) Mode is used, which allows for seeing 3D using the cross-viewing technique. If you are more comfortable with the parallel-viewing technique, you may select Side-by-side Left/Right (Parallel). The benefit of these two techniques is that they do not require any visual aids and keep coloring intact. The downside is that the entire image must fit on half of the screen; zooming in, for example, breaks the 3D effect.

If you have a pair of red/cyan filter glasses, you may wish to use one of the three anaglyph Modes. The two monochromatic anaglyph modes render anaglyphs for printing and for viewing on a screen respectively. The screen-specific anaglyph will exhibit reduced cross-talk (also known as "ghosting") in most cases. An "optimized" Color mode is also available, which retains some coloring. Visual spectrum astrophotography tends to contain few colors that are retained in this way; narrowband composites, however, can benefit. Finally, a Depth Map mode is available to inspect (or save) the z-axis depth information that was generated by the current model.
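
To make the difference between these modes concrete, the following is a minimal Python sketch of how a left-eye and a right-eye view could be combined. It is not the module's own rendering code. The function names are hypothetical, images are simplified to lists of grey levels, and the anaglyph assumes the common convention of the red filter sitting over the left eye.

```python
# Illustrative sketch only; not StarTools' actual rendering code.
# An image is a list of rows; each pixel is a grey level in [0.0, 1.0].

def side_by_side(left, right, cross=True):
    """Join two equally sized views row by row.

    cross=True places the right-eye view on the left half (Right/Left order,
    for cross-viewing). cross=False places the left-eye view on the left half
    (Left/Right order, for parallel-viewing).
    """
    first, second = (right, left) if cross else (left, right)
    return [row_a + row_b for row_a, row_b in zip(first, second)]


def mono_anaglyph(left, right):
    """Build a monochromatic red/cyan anaglyph as rows of (R, G, B) tuples.

    Assumption: the red filter covers the left eye, so the left view drives
    the red channel and the right view drives green and blue (cyan).
    """
    return [
        [(l, r, r) for l, r in zip(row_l, row_r)]
        for row_l, row_r in zip(left, right)
    ]
```

Note that the side-by-side output is twice as wide as either view, which is why the whole image has to fit on half of the screen.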

Modelling and synthesizing depth information for astrophotography

The depth information generated by the Stereo 3D module is entirely synthetic and should not be ascribed any scientific accuracy. However, the modelling performed by the module is based on a number of assumptions that tend to hold true for many Deep Space Objects and can hence be used for making educated guesses about objects. Fundamentally, these assumptions are:

- Dark detail is visible by virtue of a brighter background. Dust clouds and Bok globules are good examples of matter obstructing other matter and hence being in the foreground of the matter they are obstructing.

- Brighter areas (for example due to emissions or reflection nebulosity) correlate well with voluminous areas.

- Bright objects within brighter areas tend to drive the (bright) emissions in their immediate neighborhoods. Therefore these objects should preferably be shown as embedded within these bright areas.

- Bright objects (such as bright blue O and B-class stars) drive emissions in their immediate neighborhood and tend to generate cavities due to radiation pressure.

- Stark edges such as shockfronts tend to speed away from their origin. Therefore these objects should preferably be shown as veering off.

Tweaking the model

Depth information is created between two planes: the near plane (closest to the viewer) and the far plane (furthest away from the viewer). The distance between the two planes is governed by the 'Depth' parameter.

The 'Protrude' parameter governs the location of the near and far planes with respect to distance from the viewer. At 50% protrusion, half the scene will be going into the screen (or print), while the other half will appear to 'jut out' of the screen (or print). At 100% protrusion, the entire scene will appear to float in front of the screen (or print). At 0% protrusion the entire scene will appear to be inside the screen (or print).
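
The relationship between 'Depth' and 'Protrude' can be sketched in a few lines of Python. This is only an illustration of the behavior described above, not the module's implementation. The function name, the sign convention (positive means in front of the screen) and the arbitrary depth units are assumptions.

```python
def plane_positions(depth, protrude):
    """Return (near, far) offsets relative to the screen plane.

    Positive offsets lie in front of the screen, negative offsets behind it.
    depth is the near-to-far distance in arbitrary units; protrude is a
    fraction in [0.0, 1.0], so 50% protrusion is 0.5.
    """
    if depth < 0:
        raise ValueError("depth must not be negative")
    if not 0.0 <= protrude <= 1.0:
        raise ValueError("protrude must be between 0.0 and 1.0")
    near = depth * protrude   # how far the scene juts out of the screen
    far = near - depth        # how far the scene recedes into the screen
    return near, far
```

With a depth of 10 units, a protrusion of 0.5 puts the near plane 5 units in front of the screen and the far plane 5 units behind it, while a protrusion of 1.0 puts the far plane exactly on the screen.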

The 'Luma to Volume' parameter controls whether large bright or dark structures should be given volume. Objects that primarily stand out against a bright background (for example, the iconic Hubble 'Pillars of Creation' image) benefit from a shadow dominant setting. Conversely, objects that stand out against a dark background (for example M20) benefit from a highlight dominant setting.

The 'Simple L to Depth' parameter naively maps a measure of brightness directly to depth information. This is a somewhat crude tool and using the 'Luma to Volume' parameter is often sufficient.

The 'Highlight Embedding' parameter controls how strongly bright highlights are embedded within larger structures and context. Bright objects such as energetic stars are often the cause of the visible emissions around them. Given that they radiate in all directions, embedding them within these emission areas is the most logical course of action.

The 'Structure Embedding' parameter controls how small-scale structures should behave in the presence of larger scale structures. At low values for this parameter, they tend to float in front of the larger scale structures. At higher values, smaller scale structures tend to intersect larger scale structures more often.

The 'Min. Structure Size' parameter controls the smallest detail size the module may use to construct a model. Smaller values generate models suitable for widefields with small scale detail. Larger values may yield more plausible results for narrowfields with many larger scale structures. Please note that larger values may cause the model to take longer to compute.

The 'Intricacy' parameter controls how much smaller scale detail should prevail over larger scale detail. Higher values will yield models that show more fine, small scale changes in undulation and depth change. Lower values leave more of the depth changes to the larger scale structures.

The 'Depth Non-linearity' parameter controls how matter is distributed across the depth field. Values higher than 1.0 progressively skew detail distribution towards the near plane. Values lower than 1.0 progressively skew detail distribution towards the far plane.
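
One simple way to picture this kind of skew is a power-law remapping of normalized depth. The source does not state which curve the module uses, so the following Python sketch is purely an assumption for illustration. It also assumes depth values are normalized so that 0.0 is the far plane and 1.0 is the near plane, and the function name is hypothetical.

```python
def apply_depth_nonlinearity(depth, nonlinearity):
    """Remap a normalized depth value with a power curve.

    Assumptions (not confirmed by the source): depth is in [0.0, 1.0] with
    0.0 at the far plane and 1.0 at the near plane, and the skew is a simple
    power law. Values above 1.0 push depths towards the near plane, values
    below 1.0 push them towards the far plane, and 1.0 leaves them unchanged.
    """
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be between 0.0 and 1.0")
    if nonlinearity <= 0:
        raise ValueError("nonlinearity must be positive")
    return depth ** (1.0 / nonlinearity)
```

Under these assumptions, a pixel a quarter of the way from the far plane moves to the halfway point at a setting of 2.0, and recedes further towards the far plane at a setting of 0.5.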

Exporting to 3D-capable media

Besides rendering images as anaglyphs or side-by-side 3D stereo content, the Stereo 3D module is also able to generate Facebook 3D photos, as well as interactive self-contained 2.5D and Virtual Reality experiences.

Standalone Virtual Reality experience

The 'WebVR' button in the module exports your image as a standalone HTML file. This file can be viewed locally in your web browser, or it can be hosted online.

It renders your image as an immersive VR experience, with a large screen wrapping around the viewer. The VR experience can be viewed in most popular headsets, including HTC Vive, Oculus, Windows Mixed Reality, GearVR, Google Daydream and even sub-$5 Google Cardboard devices.

To view an experience, put it in an accessible location (locally or online) and launch it from a WebVR/XR capable browser.

Please note that landscape images tend to be more immersive.

Standalone interactive 2.5D web viewer

The 'Web2.5D' button in the module exports your image as a standalone HTML file. This file can be viewed locally in your web browser, or it can be hosted online.

Depth is conveyed by a subtle, configurable, bobbing motion. This motion subtly changes the viewing angle to reveal more or less of the object, depending on the angle. The motion is configurable both by you and the viewer in both X and Y axes. The motion can also be configured to be mapped to mouse movements.

A so-called 'depth pulse' can be sent into the image, which travels through the image from the near plane to the far plane, highlighting pixels of equal depth as it travels. The 'depth pulse' is useful to re-calibrate the viewer's perspective if background and foreground appear swapped.

Hosting the file online allows for embedding the image as an IFRAME. The following is an example of the HTML required to insert an image in any website:
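
The idea behind the depth pulse can be sketched as a thin band that sweeps through a depth map. The Python below is a hedged illustration, not the viewer's actual code. The function names, the band thickness and the normalization (0.0 at the far plane, 1.0 at the near plane) are all assumptions.

```python
def pulse_positions(steps):
    """Return pulse depths travelling from the near plane (1.0) to the far plane (0.0)."""
    if steps < 2:
        raise ValueError("steps must be at least 2")
    return [1.0 - i / (steps - 1) for i in range(steps)]


def depth_pulse_mask(depth_map, pulse_depth, thickness=0.05):
    """Mark pixels whose depth lies within the pulse band.

    depth_map is a list of rows of normalized depths (assumed 0.0 = far,
    1.0 = near). A pixel is highlighted when its depth is within half the
    band thickness of the current pulse depth.
    """
    half = thickness / 2
    return [[abs(d - pulse_depth) <= half for d in row] for row in depth_map]
```

Stepping through `pulse_positions` and highlighting the masked pixels at each step lights up the nearest structures first and the most distant ones last, which is the cue that helps the viewer re-establish which parts are foreground.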

<iframe scrolling="auto" marginheight="0" marginwidth="0" style="border:none;max-width:100%;" src="https://download.startools.org/pillars_stereo.html?spdx=4&spdy=3&caption=StarTools%20exports%20self-contained,%20embeddable%20web%20content%20like%20this%20scene.%20This%20image%20was%20created%20in%20seconds.%20Configurable,%20subtle%20movement%20helps%20with%20conveying%20depth." frameborder="0"></iframe>

The following parameters can be set via the URL:

- modex: 0=no movement, 1=positive sine wave modulation, 2=negative sine wave modulation, 3=positive sine wave modulation, 4=negative sine wave, 5=jump 3 frames only (left, middle, right), 6=mouse control

- modey: 0=no movement, 1=positive sine wave modulation, 2=negative sine wave modulation, 3=positive sine wave modulation, 4=negative sine wave, 5=mouse control

- spdx: speed of x-axis motion, range 1-5

- spdy: speed of y-axis motion, range 1-5

- caption: caption for the image
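
When many images need embedding, the URL and IFRAME markup can be generated rather than written by hand. The following Python sketch uses only the standard library. The function names and the example.com host are hypothetical, and the allowed ranges are simply read off the parameter list above.

```python
from html import escape
from urllib.parse import quote, urlencode

# Allowed value ranges, taken from the parameter list above.
_RANGES = {"modex": (0, 6), "modey": (0, 5), "spdx": (1, 5), "spdy": (1, 5)}


def viewer_url(base_url, caption=None, **params):
    """Append validated viewer parameters to the exported file's URL."""
    for name, value in params.items():
        if name not in _RANGES:
            raise ValueError(f"unknown parameter: {name}")
        low, high = _RANGES[name]
        if not low <= value <= high:
            raise ValueError(f"{name} must be between {low} and {high}")
    query = dict(params)
    if caption is not None:
        query["caption"] = caption
    if not query:
        return base_url
    # quote_via=quote encodes spaces as %20 rather than '+'.
    return base_url + "?" + urlencode(query, quote_via=quote)


def iframe_html(url):
    """Wrap a viewer URL in minimal IFRAME markup, escaping it for HTML."""
    src = escape(url, quote=True)
    return f'<iframe style="border:none;max-width:100%;" src="{src}"></iframe>'


url = viewer_url("https://example.com/pillars_stereo.html",
                 caption="Pillars of Creation", spdx=4, spdy=3)
print(iframe_html(url))
```

Escaping the URL turns each `&` into `&amp;` inside the `src` attribute, which browsers decode back to `&` when loading the frame.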

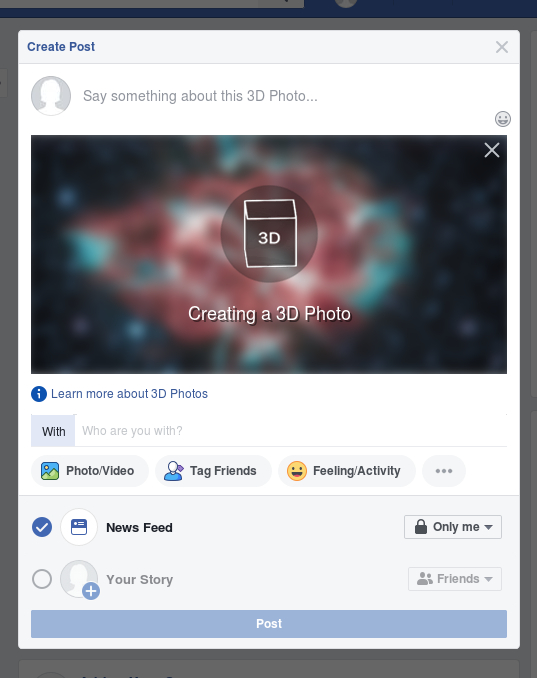

Facebook 3D photos

The Stereo 3D module is able to export your images for use with Facebook's 3D photo feature.

The 'Facebook' button in the module saves your image as dual JPEGs: one image that ends in '.jpg' and one image that ends in '_depth.jpg'. Uploading these images as photos at the same time will see Facebook detect and use the two images to generate a 3D photo.

Please note that, due to Facebook's algorithm being designed for terrestrial photography, the 3D reconstruction may be a bit odd in places, with artifacts appearing and stars detaching from their halos. Nevertheless, the result can look quite pleasing when simply browsing past the image in a Facebook feed.
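
Because both files need to be uploaded together, it can help to check that the pair is complete before uploading. The small Python sketch below derives the expected depth map filename from the image filename. It assumes the two files share the same base name, which the description above suggests but does not state outright, and the function name is hypothetical.

```python
from pathlib import Path


def facebook_pair(image_path):
    """Return (image, depth_map) paths for an exported Facebook 3D photo.

    Assumption: the depth map has the same base name as the image, with
    '_depth' inserted before the '.jpg' extension.
    """
    image = Path(image_path)
    if image.suffix.lower() != ".jpg":
        raise ValueError("expected a file ending in .jpg")
    return image, image.with_name(image.stem + "_depth.jpg")


image, depth_map = facebook_pair("exports/m20.jpg")
print(image.name, depth_map.name)
```

Calling `depth_map.exists()` on the result is then enough to confirm that both files are present in the export folder.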

3D-capable TVs and projectors

TVs and projectors that are 3D-ready can - at minimum - usually be configured to render side-by-side images as 3D. Please consult your TV or projector's manual or in-built menu to access the correct settings.
